Summary

Engineers at WillowTree have been using AI tools like GitHub Copilot and Chat-GPT since they first arrived on the scene. As these tools become more advanced and prevalent, we wanted to explore how exactly they add value to our teams.

To learn more about the effects of AI tools on engineering velocity and product results, WillowTree organized a case study in which two teams built the same product over the course of six days. One team was encouraged to use AI tools such as GitHub Copilot and Chat-GPT in their development process, while the other team didn’t use AI at all.

At the end of the case study, the AI team had completed 11 user stories, while the no-AI team completed 9, indicating that AI tools can help to improve productivity. We found a possible trade-off between velocity and product quality, as the AI team had a few more minor defects in their final product. However, various confounding variables, discussed in more detail below, could have affected the results.

In terms of AI use cases, engineers reported that GitHub Copilot was the most useful tool for daily tasks, significantly speeding up velocity by autocompleting tests and tedious, boilerplate code. Chat-GPT was also helpful, particularly when used to learn about an established technology. However, Chat-GPT hallucinated answers and lacked up-to-date knowledge. For more accurate responses to complex questions, engineers preferred using Phind, an AI-powered developer search engine.

As AI technologies become a more and more prominent part of the developer experience and everyday life, it’s important to try to gain a deeper understanding of how they can affect our workstyle and product outcomes. We are all hearing the hype about tools like GitHub Copilot and Chat-GPT, and WillowTree teams are already using them on most of our projects. But how exactly do they add value to a team, and to what degree? In an effort to measure this, WillowTree recently recruited a few unallocated team members for an “experiment” that would help us learn more about the current state of AI tools, how to best leverage them, and their effects on developer experience and velocity. In this article, I’ve put together some of the most important learnings and takeaways from the experience. Although this case study was a first iteration and our results aren’t quite ready to be carved in stone, I believe we gathered some pretty valuable and interesting insights.

Disclaimer: I was a part of the no-robots team, and in true no-robots fashion, this article is entirely human-generated. (Though I hypothesize that having Chat-GPT write it would have been significantly faster.)

The case study setup

A few weeks ago, two small teams of WillowTree engineers embarked on a mission to answer the question: What is the impact of using AI tools for engineering? Each team was composed of two developers and a test engineer. Led by the same technical requirements manager, they worked on rebuilding an existing weather app in React Native, with a focus on iOS. The teams each had their own Jira Kanban board and backlog, with identical tickets arranged in the same order. The big difference: one team was encouraged to leverage AI tools during their development and testing process, while the other was not allowed to use any AI at all. The robots team was called Team Skynet¹, and the no-robots team was Team Butlerian² (they will be referred to as such from this point on).

[Images: Team Skynet (source: Popular Mechanics) and Team Butlerian (source: Dune Wiki)]

The teams had six days to work through the backlog and get as much done as they could. Each team member had only 5 hours per day to work on the project. The timing constraint was intended to make sure the teams worked an equal number of hours while accounting for other responsibilities they had while unallocated. To keep variables under control as much as possible, the teams also agreed on the same Expo³ app setup, and on the same testing framework and test coverage goal. As far as individual package choices were concerned, the teams made their own decisions independently. Repos were set up by each team the day before development began.

With this setup, both teams would be as equal as possible to start and avoid (dis)advantages on either side. Still, it’s worth noting that this case study was not meant to be a rigorous scientific experiment. Rather, it was a way to start investigating the use of AI in engineering at WillowTree and gather some soft findings. In the future, additional studies can incorporate feedback gathered from this round and help validate our results.

And… go!

Teams began development on the same day and had daily standups with the requirements manager to share progress and ask questions. There were also team-specific and shared Slack channels where we could work out any uncertainties that came up. And there were quite a few of those. As development progressed, we had to work through questions about Figma assets, API endpoints, test framework compatibility, time tracking, and ticket order. While we had no norming or grooming sessions as we would on a real project, the teams collaborated to agree on paths forward. As we completed tickets, we noted the amount of time spent on each one. Both teams also wrote about their experience in “dev journals.”

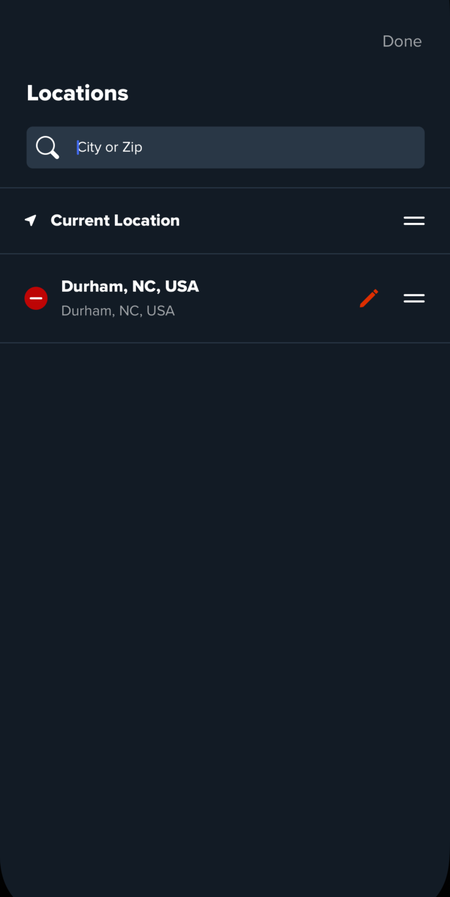

To give you an idea of the work we completed as part of the weather app rebuild, I’ll list some of the main features:

- Bottom-tabs navigator

- Home screen for a user with no weather locations saved

- Location screen where the user could search for and add locations

- Locations list with data retrieved from local storage

- The ability to turn on “current location”

- Loading and error states

- Most importantly, dark mode 😎

Rebuilding the weather app was a pretty fun challenge and required a good variety of technologies for which we could test the powers of AI (animations, navigation, local storage, theming, SVGs, APIs, testing, and other good stuff). But before we knew it, it was the end of day six and time to draw some conclusions. Where did both teams stand?

Just the facts

Team Butlerian had 9 completed stories, with 1 ticket remaining in Test and 1 in Progress.

Team Skynet had 11 completed stories, with 1 ticket in Progress.

Initial velocity summary

| | Team Butlerian | Team Skynet |
| --- | --- | --- |
| Completed Stories | 9 | 11 |
| Velocity* | 1.50 | 1.83 |

*Velocity is calculated as the number of completed stories divided by the number of days (6)

If we do some math…

(1.83 – 1.5) / 1.5 * 100 = 22
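The same arithmetic can be sketched in a few lines of TypeScript. This is only an illustration of the calculation; the names `velocity` and `percentIncrease` are invented for this example and were not part of the case study.

```typescript
// Illustrative helpers; the names are assumptions, not code from the case study.

/** Completed stories per day of development. */
function velocity(completedStories: number, days: number): number {
  return completedStories / days;
}

/** Relative change from `baseline` to `value`, as a percentage. */
function percentIncrease(baseline: number, value: number): number {
  return ((value - baseline) / baseline) * 100;
}

const DAYS = 6;
const butlerian = velocity(9, DAYS); // no-AI team
const skynet = velocity(11, DAYS); // AI team

console.log(butlerian.toFixed(2), skynet.toFixed(2));
console.log(`${Math.round(percentIncrease(butlerian, skynet))}% higher velocity`);
```

Working from the unrounded velocities gives roughly 22.2%, which rounds to the same figure as the calculation with 1.83 and 1.5.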

A 22% increase in velocity with the use of AI tools. So… the AI team won, right?

Well, yes and no. The answer is a lot more nuanced.

Analyzing the results

In the couple of days after development ended, everyone who participated in the case study spent some time reflecting on their experience, both in writing and in discussion meetings.

After some consideration, we knew we couldn’t simply divide the numbers and arrive at a percentage increase in velocity from AI. There were a lot of variables at play, and the qualitative data gathered from team members needed to be considered. All this feedback led us to investigate code quality. Although AI seemed to increase velocity, did it have any effects on the quality of the codebase? We approached this comparison from a few different angles:

- What was the code coverage for unit tests, and were they all passing?

- What was the code coverage for UI tests, and were they all passing?

- How reusable and maintainable was the code? (This one could be a bit subjective.)

- How many UI bugs were found in the final product, and what was their severity?

Each team had slightly different test coverage and ways of keeping the code maintainable and reusable, but overall the codebases were similar in these respects. However, we found that Team Skynet had a few more minor bugs from a user experience standpoint. There could be various factors at play here, such as rushing towards the end because of the time constraint, willingness to experiment with new approaches with the help of AI, and simply the possibility of more bugs being introduced with the completion of extra stories by Team Skynet. Although the bugs are not necessarily attributable to AI, developers may want to consider the potential tradeoff between increased velocity and the need for additional troubleshooting to maintain quality.

After assessing the bugs and estimating the time it would take to fix them, the adjusted velocities were 1.406 for Team Butlerian and 1.506 for Team Skynet.

Comparison summary

| | Team Butlerian | Team Skynet |
| --- | --- | --- |
| Unit Test Coverage | 83% | 93% |
| UI Test Coverage | 85% | 77% |
| UI Bugs | 🪲 | 🪲🪲🪲🪲 |
| Adjusted Velocity | 1.406 | 1.506 |
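For a rough sense of how much the gap narrows, the adjusted velocities can be compared the same way as the raw ones. The sketch below only reuses the published figures; how the estimated bug-fix time was folded into the adjusted numbers is not spelled out here, so no attempt is made to reproduce that step. The `relativeGain` helper is a name invented for this example.

```typescript
// Published figures from the two summary tables (stories per day).
const raw = { butlerian: 9 / 6, skynet: 11 / 6 };
const adjusted = { butlerian: 1.406, skynet: 1.506 };

/** Percentage by which Team Skynet's velocity exceeds Team Butlerian's. */
function relativeGain(v: { butlerian: number; skynet: number }): number {
  return ((v.skynet - v.butlerian) / v.butlerian) * 100;
}

console.log(`raw gain: ${relativeGain(raw).toFixed(1)}%`);
console.log(`adjusted gain: ${relativeGain(adjusted).toFixed(1)}%`);
```

With these inputs, Team Skynet’s lead shrinks from roughly 22% to roughly 7% once the estimated bug-fix time is taken into account.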

Where did AI come in handy? And where not so much?

Another important takeaway we hoped to get from this case study was determining where AI is most useful, and which tools might be fit for different types of tasks. The dev journals of Team Skynet provided the best insights here. Throughout the project, Team Skynet documented their experiences using AI for different tasks and summarized their findings based on each task’s risk and value. These are the tools they used:

| GitHub Copilot | Chat-GPT | Phind |
| --- | --- | --- |
| A code completion AI tool integrated with development environments such as VS Code | A conversational large language model (LLM) created by OpenAI | An AI search engine for developers that shows where answers came from (e.g., Stack Overflow posts) |

GitHub Copilot

According to the team, GitHub Copilot was the most useful. It had low risk and provided high value. Although not all suggestions were accurate, engineers still found that it sped up their development process significantly. They were impressed with Copilot’s ability to suggest multiple lines of code, sometimes before they even knew they would need it. Although Copilot wouldn’t be able to code a whole app on its own, they found it immensely helpful for getting through boilerplate and monotonous tasks.

For testing, Team Skynet’s test engineer reported that GitHub Copilot was able to take over test-writing after just a few examples. Given only the name of a test, Copilot could autocomplete the full test code pretty much on its own. While in many cases no corrections were needed, there were also times when Copilot was just a little bit off, just enough to fool the team and cause confusion later on.
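To make the “name first, body autocompleted” workflow concrete, here is a hand-written sketch of the pattern. Nothing in it is actual Copilot output or code from either team: `formatTemperature`, `runTest`, and `expectEqual` are hypothetical names, and the tiny runner merely stands in for a real test framework so that the example runs on its own.

```typescript
// Hypothetical helper under test; not taken from either team's codebase.
function formatTemperature(celsius: number, unit: "C" | "F"): string {
  const value = unit === "C" ? celsius : (celsius * 9) / 5 + 32;
  return `${Math.round(value)}°${unit}`;
}

// Minimal stand-in for a test runner, so the sketch is self-contained.
function runTest(name: string, body: () => void): void {
  body();
  console.log(`passed: ${name}`);
}

function expectEqual<T>(actual: T, expected: T): void {
  if (actual !== expected) {
    throw new Error(`expected ${String(expected)}, got ${String(actual)}`);
  }
}

// The engineer types only the descriptive name; a completion tool could
// plausibly propose a body like the one below.
runTest("formats 0 degrees Celsius as 32°F", () => {
  expectEqual(formatTemperature(0, "F"), "32°F");
});

// Completions still need review: a suggested expectation of "99°F" would look
// plausible here, but 37.5°C is 99.5°F, which rounds to 100°F.
runTest("rounds 37.5 degrees Celsius to 100°F", () => {
  expectEqual(formatTemperature(37.5, "F"), "100°F");
});
```

The second test hints at why “just a little bit off” is costly: the wrong expectation reads as naturally as the right one, so the mistake tends to surface only later.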

Chat-GPT

The team classified Chat-GPT as having medium-high risk, and medium-low value. They were often led astray by the tool, which tended to “hallucinate” answers when it didn’t have the knowledge needed to answer a question. They noticed that Chat-GPT would also overcomplicate answers sometimes, for example telling developers they need to install multiple dependency packages, when they really only needed a few. One team member mentioned wanting to double-check the LLM’s answers against their own Google searches afterward.

The LLM’s knowledge cutoff date of September 2021 was also a significant barrier when the team wanted to learn about new technologies. For example, developers wanted to integrate Expo Router⁴ for their navigation setup, but Chat-GPT had no knowledge of the tool and was unable to help. Overall, the developers felt that Chat-GPT would not be their go-to source of information for technologies that the model wasn’t trained on. They mentioned still going to docs as their primary source.

Despite the downsides, Chat-GPT proved very helpful for introducing engineers to well-established tools they were unfamiliar with. Team Skynet’s test engineer felt more empowered to help troubleshoot and solve issues that developers came across despite having no prior knowledge of React Native. Having Chat-GPT quickly provide background knowledge and potential solutions helped him to feel more comfortable participating in discussions. Without the tool, he believed he’d have spent much more time researching solutions. However, he also ran into trouble when Chat-GPT didn’t have enough information on the relatively new testing framework Detox and hallucinated fake solutions.

Phind

Compared to Chat-GPT, the team felt that Phind was more transparent and reliable. They classified it as medium risk and medium value, better than Chat-GPT for providing answers to their questions. Because of this, they ended up using it more often throughout the project when researching answers to complex problems.

AI tools risk/value chart

| Tool | Risk | Value |
| --- | --- | --- |
| GitHub Copilot | Low | High |
| Phind | Medium | Medium |
| Chat-GPT | Medium-High | Medium-Low |

Some confounding variables

Having shared the results, it’s important to mention some variables that may have influenced them. Due to the nature of the case study, it wasn’t possible to control for all external factors, although some of them could potentially be mitigated in future iterations.

- Team-member experience – Although teams were fairly matched, each person had different levels of experience with React Native and other tools used in rebuilding the weather app. Seniority levels also differed, especially between the test engineers.

- Package choices – Team Skynet mentioned feeling empowered to try new packages as part of their development process because of these AI tools. This, as well as personal preferences, led the two teams to use different packages for implementing features like navigation, theming, and local storage. We therefore came across different challenges in implementing these features.

- Teamwork style – Team Skynet reported mobbing throughout most of the project, while Team Butlerian engineers worked independently, coming together to troubleshoot or clear up uncertainties. This could have also affected the amount of time each team spent per ticket, and the overall amount of work completed.

- Level of familiarity with AI tools – An engineer on Team Skynet mentioned that it would have helped to be more familiar with prompt engineering in order to really make the most out of these tools. Engineers had no prior training in this area.

- Inter-team interactions – Teams shared the same standup, and were therefore able to see each other’s progress. This, combined with the time tracking constraint, may have affected the speed and quality of team members’ work.

Possible improvements for case study 2.0

During our retro, the teams also discussed ideas that could potentially make a future iteration of the case study more closely resemble a client project.

- Allocating additional time to flesh out acceptance criteria for each ticket. This could have minimized the amount of uncertainty that came up as development work progressed.

- Having a repo set up beforehand, which teams could clone and start working from. Setting up a repo could do a couple of things: 1) encourage teams to use the same setup and packages (making results more comparable), and 2) add more context from which tools like GitHub Copilot pull information for providing suggestions.

- Extending the timeline for the project, if possible. This project felt very short with only six days of development. Over a longer period of time, more solid conclusions could be drawn.

- Including additional disciplines. During the project, teams had numerous questions about Figma assets and the expected user experience once we dug into the details of more complex tickets. A designer would have been able to really help here, where our AI tools couldn’t. There is also the potential for seeing how AI can help other disciplines, including designers and product architects.

TLDR: Overall takeaways

So that was a lot. What should you really take away from this? What did we learn from the AI study this time around?

The team that used AI tools (GitHub Copilot, Chat-GPT, and Phind) did see an increase in velocity. They found that GitHub Copilot was the most useful tool for their daily tasks, significantly speeding up velocity by autocompleting tests and tedious, boilerplate code. They also found Chat-GPT helpful, particularly when using it to learn about an established technology. However, Chat-GPT hallucinated answers and lacked up-to-date knowledge. For more accurate responses to complex questions, the team preferred the developer search engine Phind. Above all, they referred to official docs as the ultimate source of truth.

One quote from the Team Skynet dev journal stood out to me as a good summary of our learnings: “AI is helpful for getting started on something, but not helpful for finishing. To fully deliver a high-quality end product/result, you need someone with expertise” (Summit Patel). AI can speed up the development process, but it still takes a developer to find bugs in generated code, architect complex solutions, and stay on top of new developments. AI may become able to do these things in the future, but for now, using it still poses tradeoffs. These tools should be used with an open mind, but a dose of skepticism. Nevertheless, it’s an exciting horizon, and with the rapidly evolving state of AI, we look forward to following up with a second run at this case study in the future. Thanks for reading!

Footnotes

1. Skynet – In the Terminator movies, Skynet is a general super-AI that causes the downfall of humanity.

2. Butlerian – A reference to the Butlerian Jihad, a crusade against “thinking machines” to free humans in the Dune universe, as depicted in Dune: The Butlerian Jihad, a novel by Brian Herbert and Kevin J. Anderson.

3. Expo – a platform that provides tools and services to make React Native development quicker and simpler.

4. Expo Router – a file-based router for React Native applications.